CogSieve model vs minesweeper

This post is not intended for the development of the algorithm for solving minesweeper. The purpose is to illustrate how the CogSieve model of intelligence and an agent guided by software based on that model may approach a game of moderate complexity such as minesweeper. Just as well we could use the Tic-Tac-Toe or some other game for this purpose.

Rules of minesweeper

Rules of any game are expressed using objects such as board, squares, mines, moves, and actions like moves (we hope that the difference between a move and making one is clear, though we are not sure if it will be useful later). The CogSieve model stresses the importance of properties. Therefore, we want to extract them from the rules of the minesweeper.

The first piece to analyze is a square. Each square will have two parameters - x and y, respective coordinates. It is important that the property "x-coordinate" has limits and those may be different from the limits of the "y-coordinate". The status of each square is another property. It may be "covered", "flagged", or "uncovered". In the latter case, one more property gets initialized - the number of adjacent mines. It is a value from the range [0, 8]. That value is meaningless without the information about adjacent squares of a given square. A set of adjacent squares is another property of a square. In a simple case, the coordinates of a square may help to populate this set. Later, we will address other properties of squares like subsets of adjacent squares.

We need to track also remaining mines and remaining covered squares. For the latter, we may consider subsets. We will discuss their use later.

Let's now consider the board. Usually, it is rectangular. But the shape can be different. The only thing that is important is how we handle the coordinates of individual squares and form the sets of their adjacent squares. In this post, we will stick to the rectangular shape.

At this moment, it is important to address how our agent will learn the rules from the text description. Obviously, by the time it will learn about minesweeper, it should know about games (and other applications) and their typical components. Boards with squares are used in many games. The concept of adjacency of squares is important in the "fill" algorithm of graphics editors. It should not be difficult for our agent to relate the rules of the minesweeper to the agent's existing knowledge.

The core algorithm of intelligence, according to the CogSieve model, is based on the set intersection operation. In this game, sets and operations with them are heavily used. In a way, this game is a very limited demonstration of how intelligence works.

One more aspect related to the rules and AI is how our agent will handle minor modifications of rules. Will it require complete retraining in that case? Humans perform minor adjustments, which don't take long. We will consider this issue later.

First and later guesses

The first move in the game is a guess. Our agent should know the basic elements of the probability theory by then. Moves in the game of minesweeper are of two broad types - guesses and confident moves. The latter involves two types of moves - flagging a mine and probing a covered square. Guesses are always probing. The difference between guesses and confident moves is in certainty about the move outcome in advance. For confident moves, we expect that the square being probed does not contain a mine or that the square being flagged contains one. This is related to the problem of consistency. We expect that the information received from the game is correct. How to treat a buggy or purposefully distorted game is not considered in this post. If our agent receives information that contradicts the rules, it may decide not to continue the game after displaying an error message.

Guesses in minesweeper are of two types - global and local. Local guesses are about a set of adjacent covered squares of one square.

Global guesses refer to all the remaining covered squares.

Before the first game, our agent will not be aware of local guesses. It will find out about them after playing several games.

While contemplating the first move, the agent will consider several factors/metrics that may be used later. The first such factor is the probability of probing a square with no mines around. Many versions of minesweeper, in such cases, automatically probe adjacent squares in the BFS fashion until no more such squares are found. If mines are distributed on the board randomly, then this probability is lowest in corner squares, higher along the edges of the board, and highest for internal squares.

But there is one more factor - we may call it "maximum white spot" exploration. There can be no guarantees with guessing moves but if they succeed then we may want to have at least a promise of the biggest effect.

The agent may come up with different metrics, test them, and keep or discard them based on the results.

Approach to patterns

If at any stage of a game, we have a possibility to make a confident move, be it flagging a mine or probing a covered square, such a move for obvious reasons has a priority over any guessing moves. And we continue making such moves until there are any. So let's analyze how our agent can approach reasoning about confident moves.

Experienced human players have a lot of patterns, which allow them to make confident moves without thinking, automatically. When we encounter patterns for the first time, we spend more time figuring them out. But later on, we learn to recognize them and bypass thinking - no need to do it twice.

We argue that those patterns can be recognized by our agent using properties of squares, which we already discussed. We may recall that each square has such properties as the set of adjacent squares and the number of mines in those squares. We also need to consider subsets - covered adjacent squares and their intersections. The important property of a set is its size - it is measurable and comparable. Because of those properties, the agent will be able to reason about the number of mines in adjacent squares.

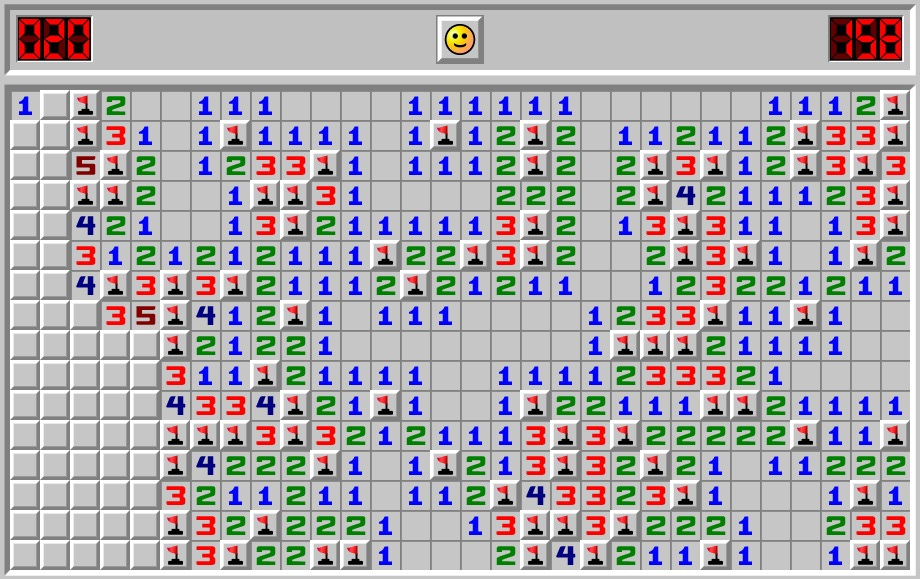

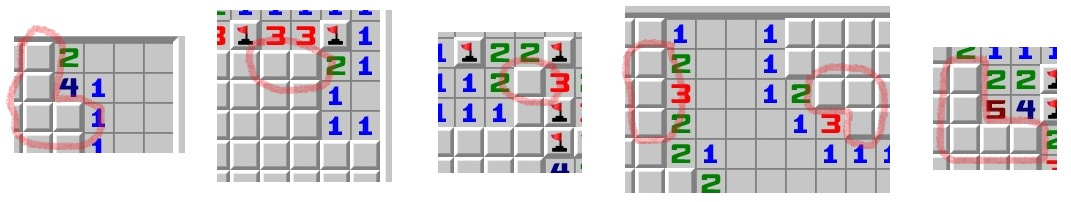

The easiest form of pattern is represented by situations when the number of adjacent covered squares corresponds to the number of mines adjacent to that square. The variant of this pattern is when some mines are already flagged near the square but the difference can only be located in the adjacent covered squares. The pictures below illustrate such cases.

In the above cases, the agent may confidently flag corresponding squares.

The opposite situation is when there are covered squares adjacent to a square but the required number of mines have been already flagged. In such cases, the agent may confidently probe the remaining covered squares. An example is shown in the picture below.

Now we will consider more complicated situations. These will involve information from several squares. Consider the picture below.

The above square with '2' on it has 3 covered adjacent squares, which contain 2 mines. The square with '1' on it immediately above the previously considered square also has 3 covered adjacent squares, which contain only 1 mine. Considering each square separately, we cannot confidently determine where to probe or flag confidently. Let's consider these squares together and intersect their adjacent covered squares. The intersection has two covered squares. And we can confidently say that in order to satisfy the requirement of the '1' square, those two covered squares contain no more than 1 mine. But three adjacent squares of the '2' square contain 2 mines! Where is the second one? There is only one square outside that intersection and it should contain a mine. The agent may confidently flag that square. Immediately after that, the below '1' square will have two covered adjacent squares and one adjacent flagged mine. Therefore, according to the previous pattern, our agent may confidently probe those covered squares. This will create a similar situation for the '2' square, and then for the '1' square, and our agent will be able to confidently flag a mine first and then probe a square.

Consider one more example of this kind.

The middle square with '1' on it has two covered adjacent squares with 1 mine in any of them. These two covered squares are also adjacent to the '1' square immediately to the right of the first considered square. Since, our agent 'knows' in which subset of covered adjacent squares the only mine is located, then the other covered squares can be confidently probed.

The above logic can only work when the numbers the agent uses are consistent. In order to check for consistency, we suggest that our agent should double-check that there are no contradictions in all the numbers reported by the game engine.

Endgame

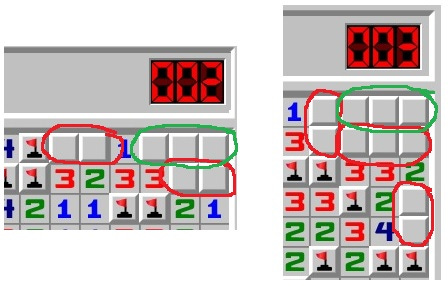

Closer to the endgame, it becomes possible to determine the smallest guaranteed non-overlapping sets of covered squares (circled with red below) containing a known number of mines each and compare their total to the total number of remaining mines. If two numbers match, we may safely probe the other covered squares (circled with green).

When the number of remaining mines is small, there may be even situations when it will be easy to determine which positions of remaining mines are the only possible to match the observed numbers on the board. In such cases, it may be necessary to look for overlapping sets. In the picture below, if only one mine is left, it can only be in the square below ‘4’. If three mines are left, that one square will be the only one safe to probe.

One more example is shown below.

Consider the common adjacent covered square for '5' and '4' in the second column. If it contains a mine then we do not have enough squares to place the remaining mines given the restrictions. Therefore, it is safe to probe that square. This kind of logic only works in the endgame. If the number of remaining mines was different we would make a different conclusion about that square or the consistency of the game.

Consider the following picture.

Deciding whether this square contains a mine or not is impossible until the very end when the agent can confidently assign the remaining mines to some subsets of the remaining covered squares. Simply checking the balance, the agent will make the decision whether to flag this square or probe.

Updated rules and inconsistencies

Imagine if we change the rules of the minesweeper and now mines can be placed in pairs horizontally or vertically but not diagonally. Will we have to retrain our agent completely? First, the agent is not trained as a neural network or any other machine learning model. It uses its knowledge from different areas to come up with solutions to current problems. In this case, it will not change its patterns, but each time any confidently flagged mine is uncovered from three sides the fourth side will be flagged immediately.

Imagine another update to the rules - now pairs cannot touch. In this case, all the squares around a confidently discovered pair will be confidently probed. Again, all the previous knowledge is in use, no retraining, just a little update.

Imagine now that there are bugs in the minesweeper application and numbers sometimes don't make sense. Most likely it may show a bigger number of adjacent mines for some squares than the number of covered adjacent squares. Any other inconsistencies can also be tracked. For example, once revealed numbers on squares cannot change. If they do it will be recognized as inconsistency.

Concluding remarks

Some variants of minesweeper are played with a timer and the record time is recorded and considered as a metric for one's level in the game. Because of that, some players try to micro-optimize their moves and rely on known patterns to save time. It is obvious that the agent will understand this concept of time records but it is difficult to predict what kind of optimizations it will consider and test. Note that the agent may run some analysis after each game to prepare new approaches to test. For example, it may try to treat patterns differently and take into account the coordinates of adjacent uncovered squares. If that approach leads to faster play it will keep it in its set of tricks.

Humans may blunder and make mistakes out of miscalculation. These kinds of mistakes will hardly be possible for our agent. Instead, it may experiment with guesses and metrics used to select the best square to probe. Also, it may try to "probe" hidden restrictions on randomness used by the game engine. Even if there are no such restrictions, our agent may be "suspicious". One piece of knowledge that it should have is that "it does not hurt to generate and test hypotheses".

Minesweepers can make only one mistake. Players are different. Our agent will analyze its own mistakes to see if there is any opportunity for improvement. Your comments may well be a similar opportunity to improve the CogSieve model of intelligence.