Is Generalization about similarities or differences?

If you are new to this blog, I will give it away immediately: I advocate the idea that generalization is based on differences. Details follow.

However, I will not ignore the opposite opinion, according to which generalization is based on similarities. This camp has many supporters, and it even looks like the most widely accepted view. At least, it is reflected in the definitions of generalization given in respected dictionaries.

To illustrate the importance of generalization, consider the following quote from Georg Wilhelm Friedrich Hegel, "An idea is always a generalization, and generalization is a property of thinking. To generalize means to think." The article "GENERALIZING IS NECESSARY OR EVEN UNAVOIDABLE" by Michael F. Otte, Tânia M. Mendonça, and Luiz de Barros mentions, "A characteristic of mathematical thought is, says Peirce 'that it can have no success where it cannot generalize'. And in a similar vein Poincaré states that 'there is no science but the science of the general'."

Is it about Similarities?

So, how does generalization work? Wikipedia quotes "Definition of generalization | Dictionary.com", "A generalization is a form of abstraction whereby common properties of specific instances are formulated as general concepts or claims."

Another quote from Wikipedia mentions similarity, "Generalization is the concept that humans and animals use past learning in present situations of learning if the conditions in the situations are regarded as similar." This claim is based on the following book:

Gluck, Mark A.; Mercado, Eduardo; Myers, Catherine E. (2011). Learning and memory : from brain to behavior (2nd ed.). New York: Worth Publishers. p. 209. ISBN 9781429240147.

The article "GENERALIZING IS NECESSARY OR EVEN UNAVOIDABLE", mentioned above, claims, "So we must create new concepts and ideas, or ideal objects. To generalize means just this, introduce new ideal objects."

To understand why the above suggestion is wrong, consider first a cute kitten. House cats (Felis catus) generalize to the family Felidae, to which pumas, tigers, and lions belong. Immediately, we see no ideal objects, and we see more differences. If we generalize further to animals and animate objects, the "ideal" will become blurred.

Now, let's proceed to the entry on "Vagueness" in the Stanford Encyclopedia of Philosophy. The key phrase there, in my opinion, is "Where there is no perceived need for a decision, criteria are left undeveloped." It is by the introduction of differentiating factors that we start specializing into subclasses. The opposite process is exactly generalization. Note that this process considers inter-class differences, not intra-class similarities. Intra-class similarities, if you ask me, are a residual benefit, not the initial intention.

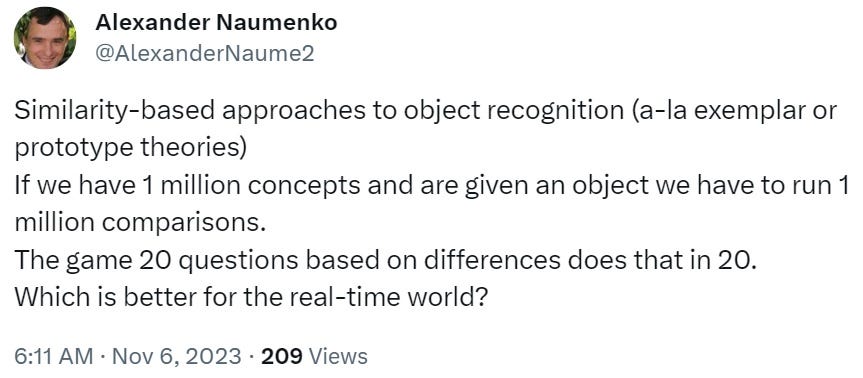

A Lesson from Object Recognition

Similarities figure in theories of exemplars and prototypes that try to explain how we recognize objects. Those theories have a problem if they rely on intra-class similarities: to be recognized, any unknown object needs to be compared to all the concepts known to a person. Those theories do not consider how concepts are related to each other, and they do not exploit those relationships.

Now, consider the following wonderful quote from David Chalmers.

There is some hierarchical organization of concepts. You may imagine a tree. Each node in that tree is a concept. There are differentiating factors that divide that concept into subclasses. The division is based on comparable properties with ranges. I claim that words of natural languages refer to those ranges. Hence, the need for a new breed of dictionaries, which will reflect the use of comparable properties, ranges, and differentiating factors in the concept formation process. Not only will such dictionaries enable better automated translation and natural language understanding by machines but they will help us in understanding our cognitive processes.
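The tree described above can be sketched in a few lines. This is only a toy illustration, not a model of any real taxonomy: the `Concept` class, the `classify` function, the vehicle example, and every numeric range are assumptions made up for the sketch. Each node names one comparable property (its differentiating factor), and each subclass owns a half-open range of that property.

```python
from dataclasses import dataclass, field
from typing import Optional

INF = float("inf")


@dataclass
class Concept:
    name: str
    factor: Optional[str] = None  # comparable property that divides this concept
    branches: list = field(default_factory=list)  # (low, high, Concept); range is [low, high)

    def split(self, factor, *branches):
        """Introduce a differentiating factor and the subclasses it creates."""
        self.factor = factor
        self.branches = list(branches)
        return self


def classify(root, obj):
    """Descend from the root, following the range the object's value falls into."""
    path = [root.name]
    node = root
    while node.factor is not None and node.factor in obj:
        value = obj[node.factor]
        for low, high, child in node.branches:
            if low <= value < high:
                node = child
                path.append(node.name)
                break
        else:
            break  # no range matches: stay at the current, more general concept
    return path


# Hypothetical toy hierarchy; the ranges are illustrative only.
bicycle, motorcycle = Concept("bicycle"), Concept("motorcycle")
car, bus = Concept("car"), Concept("bus")
two = Concept("two-wheeler").split("engine_cc", (0, 1, bicycle), (1, INF, motorcycle))
four = Concept("four-wheeler").split("seats", (1, 10, car), (10, INF, bus))
vehicle = Concept("vehicle").split("wheels", (2, 3, two), (4, 5, four))

print(classify(vehicle, {"wheels": 2, "engine_cc": 600}))
# ['vehicle', 'two-wheeler', 'motorcycle']
print(classify(vehicle, {"wheels": 4}))
# ['vehicle', 'four-wheeler']
```

In this sketch an object is compared only against the ranges along one path, not against every known concept. When a property is unknown, as with the second object, the descent simply stops at the more general concept.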

Is it about Differences?

Reading the title of Deleuze's "Difference and Repetition", one may think so. However, the following quote suggests that Deleuze failed to recognize all the benefits of differences, "Resemblances are unpacked in order to discover an equality which allows the identification of a phenomenon under the particular conditions of an experiment". No worries, he was not our only hope.

Jorge Luis Borges hints at the role of differences directly, "To think is to forget a difference, to generalize, to abstract." In my opinion, subclasses are introduced by introducing differentiating factors among instances of the parent class. When we forget those differentiating factors, we generalize from subclasses to the parent class. There is more to it - as we can differentiate the parent class using different properties, we may have different paths for generalization. This is reflected in the article "Learning How to Generalize" by Joseph L. Austerweil, Sophia Sanborn, Thomas L. Griffiths, which states, "how people generalize varies in complex ways depending on the context or domain".
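Here is a minimal sketch of "forgetting a difference", under the assumption that instances can be described by a handful of named properties. The `generalize` function and the three toy animals with their property values are invented for illustration. Forgetting one property merges the instances that differed only in that property, and forgetting a different property gives a different path of generalization.

```python
from collections import defaultdict


def generalize(instances, forget):
    """Group instances that become indistinguishable once `forget` is ignored."""
    groups = defaultdict(list)
    for name, props in instances.items():
        # What is left of the description after the difference is forgotten.
        key = tuple(sorted((k, v) for k, v in props.items() if k != forget))
        groups[key].append(name)
    return sorted(sorted(members) for members in groups.values())


# Hypothetical toy data: two differentiating factors, three instances.
cats = {
    "house cat": {"size": "small", "tame": True},
    "wildcat": {"size": "small", "tame": False},
    "lion": {"size": "large", "tame": False},
}

print(generalize(cats, forget="tame"))
# [['house cat', 'wildcat'], ['lion']]
print(generalize(cats, forget="size"))
# [['house cat'], ['lion', 'wildcat']]
```

The same three instances end up in different parent classes depending on which difference is dropped.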

The book "Signs of Difference" by Susan Gal and Judith T. Irvine mentions, "For many linguists, the whole that is "a language" represents not a standard, but a generalization over variation."

The article "Models that allow us to perceive the world more accurately also allow us to remember past events more accurately via differentiation" by Asli Kiliç, Amy H. Criss, Kenneth J. Malmberg, Richard M. Shiffrin discusses how differentiation contributes to the workings of perception and memory.

The article "Generalization and induction: Misconceptions, clarifications and a classification of induction" by Eric W. K. Tsang and John N. Williams mentions, "One might naturally think of the movement from observation of particular phenomena to something more general as terminating not in a conclusion, but rather the formation of a concept (in other words, a notion) of X, the possession of which is just one’s ability to reliably distinguish Xs from non-Xs." The authors did a great job of enhancing our understanding of generalization. The article is amazing - a must read!

But I am cautious about the use of formal logic and truth with respect to natural languages.

Language is about sharing information about some context, be it a real or fictional event, chemical reactions, or algebraic derivations. We can combine contexts at will. For example, we can talk about a professor who mentioned a Star Wars scene in a lecture and then proceeded to explain chemical reactions. Come on, everyone loves Star Wars. I know.

Exclamations deliver information about our attitude. Commands deliver requests to perform actions. Declarative sentences deliver facts. In terms of constituents, they inform us about (Who or What) (did What) (to Whom or to What) Where, When, How.

To understand the importance of questions, imagine two interlocutors: one knows all those components, the other knows all but one - any one. The latter may ask a question about any constituent to fill in the blank spot in their memory. The question will contain keys to a fitting answer. Using those keys, the first interlocutor will find the answer in their own memory.
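The exchange between the two interlocutors can be sketched as a lookup. This is a rough illustration, not a claim about how memory actually works: the slot names (`who`, `did`, `what`, `where`), the `answer` function, and the stored facts are all assumptions chosen for the example. A question is a fact with one constituent left empty, and the filled-in constituents serve as the keys.

```python
def answer(memory, question):
    """Return the missing constituents of every remembered fact that fits the keys."""
    unknown = [slot for slot, value in question.items() if value is None]
    known = {slot: value for slot, value in question.items() if value is not None}
    return [
        {slot: fact.get(slot) for slot in unknown}
        for fact in memory
        if all(fact.get(slot) == value for slot, value in known.items())
    ]


# Hypothetical memory of the first interlocutor.
memory = [
    {"who": "the professor", "did": "mentioned", "what": "a Star Wars scene", "where": "in a lecture"},
    {"who": "the professor", "did": "explained", "what": "chemical reactions", "where": "in a lecture"},
]

# "What did the professor mention?" - every slot is a key except `what`.
question = {"who": "the professor", "did": "mentioned", "what": None}
print(answer(memory, question))
# [{'what': 'a Star Wars scene'}]
```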

Language is about so much more than mere truth conditions!

A Lesson from the Game "Bulls and Cows"

The article "Generalization and induction: Misconceptions, clarifications and a classification of induction" is interested in moving from "particular phenomena to something more general". Natural languages reflect what we have already organized over millennia of cultural progress. What about digesting new information and acquiring novel knowledge?

We have already discussed how the game 20 Questions illustrates the workings of intelligence. Now, let's consider another game, Bulls and Cows (https://en.wikipedia.org/wiki/Bulls_and_Cows). Believe me, this game is worth your attention, for it was researched by such giants as Donald Knuth and Dennis Ritchie.

We are interested in optimizing the second move of this game. The answer to our first move already filters out some of the 5040 possible combinations (10 × 9 × 8 × 7 four-digit codes without repeated digits). We may program a computer to go over all possible combinations for our second move to find a combination that will ensure what? Filtering out most of the remaining combinations? Not really. There may be various responses to the second move. Each response filters out a different number of options, leaving the player with a certain number of options to go through. Consider those numbers for each response. The optimal second move is the one whose largest such number is the smallest, that is, a minimax strategy.

Note that the second move need not contain any digits from the first move. The second move may even come from the options that were filtered out by the first move. The optimal move has something to do, if you ask me, with orthogonal probes or triangulation. It is hard to explain. We are collecting information that will allow us to exclude as many options for each digit as possible.
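The search for the second move can be sketched as follows. This is a plain brute-force illustration of the minimax idea, not a tuned solver, and all function names are made up for the example. It assumes the usual rules: a secret of four distinct digits, a bull for a right digit in the right place, a cow for a right digit in the wrong place. The `digits` and `length` parameters are an addition so that the same code can be tried on a smaller game.

```python
from collections import Counter
from itertools import permutations


def all_codes(digits="0123456789", length=4):
    """Every code with distinct digits; 10 digits and length 4 give 5040."""
    return ["".join(p) for p in permutations(digits, length)]


def score(secret, guess):
    """Return (bulls, cows) for a guess against a secret."""
    bulls = sum(s == g for s, g in zip(secret, guess))
    cows = len(set(secret) & set(guess)) - bulls  # valid because digits are distinct
    return bulls, cows


def filter_candidates(candidates, guess, response):
    """Keep only the secrets that would have produced this response."""
    return [c for c in candidates if score(c, guess) == response]


def worst_case(guess, candidates):
    """Largest number of candidates left after any single response to `guess`."""
    return max(Counter(score(c, guess) for c in candidates).values())


def best_next_guess(candidates, pool):
    """Minimax: the guess from `pool` whose worst case is the smallest."""
    return min(pool, key=lambda g: worst_case(g, candidates))


codes = all_codes()
remaining = filter_candidates(codes, "0123", (0, 1))  # example first move and response
print(len(codes), len(remaining))
```

Calling `best_next_guess(remaining, codes)` then runs the search over all 5040 guesses, including those already ruled out by the first response, which is why `pool` and `candidates` are separate arguments. In pure Python this takes millions of `score` calls, so expect it to run for a while. Passing fewer digits and a shorter length is a quick way to experiment.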

We may want something similar (you see, I am not afraid of using that word) while processing "particular phenomena" in the search for general clues.

***

In general, that is all about generalization except ...